This week I had the pleasure of attending the AI for Earth summit at Microsoft HQ, where the focus was on turning code that runs locally into APIs hosted on Azure that can be accessed from anywhere. I’ve got to admit I’m still developing my working knowledge of this process, but I am getting there, and I’ve just about got my conceptual understanding to an acceptable level. This post will be at a fairly basic level and I hope to follow up soon with a more specific discussion of my own experience developing my ice surface classification code into an Azure API. As always, suggestions, corrections and refinements are most welcome!

APIs

API stands for Application Programming Interface – a name for any tool that enables a user to send an instruction or piece of information to a server and have some output returned. An API is a piece of code that acts as a broker that allows a user to interface with a program or database held on a server. This means that adapting code from a script that runs on a local machine into an API hosted on a server turns that code into a public resource that can be accessed and in some cases modified by other people. APIs are developed with scaling to many users in mind.

The standard architecture for API development is known as REST, which stands for Representational State Transfer. An API can be considered “RESTful” if it conforms to a few architectural norms such as having a uniform interface and separate client and server.

A RESTful API is generally implemented over HTTP, which has a vocabulary of commands (known as methods) that the client sends to create, query, modify or delete information in a server-side resource such as a database. The most common are:

PUT: replace an existing resource, or create it at a known URL

POST: create a new resource (e.g. append a row to a table)

GET: retrieve information without modifying it

DELETE: remove a resource

PATCH: update part of a resource

These commands can be sent from any programming language, and they are sent to the API via a URL. The URL is known as the “end-point” and it can be thought of as the address of the resource that the command is sent to. Sending a command to the URL is known as “making a request”.

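A request using PUT, POST or PATCH will usually also include some data to define what should be added or updated in the server-side resource. This is very often, but by no means always, JSON data. GET and DELETE requests typically carry no data of their own, because the URL already identifies the resource to retrieve or remove.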
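As a rough illustration of the vocabulary above, here is a minimal sketch that builds (but does not send) four requests using only Python's standard library. The end-point URL, the field names and the `build_request` helper are all made up for the example, so treat them as assumptions rather than any real API.

```python
import json
import urllib.request

# Hypothetical end-point; substitute the URL of a real API.
ENDPOINT = "https://example.com/api/observations"


def build_request(method, url, payload=None):
    """Build (but do not send) an HTTP request with an optional JSON body."""
    data = None
    headers = {}
    if payload is not None:
        data = json.dumps(payload).encode("utf-8")
        headers["Content-Type"] = "application/json"
    return urllib.request.Request(url, data=data, headers=headers, method=method)


# POST: create a new resource; the JSON body describes it.
create = build_request("POST", ENDPOINT, {"site": "S6", "albedo": 0.45})

# GET: retrieve a resource without modifying it.
read = build_request("GET", ENDPOINT + "/1")

# PATCH: update part of an existing resource.
update = build_request("PATCH", ENDPOINT + "/1", {"albedo": 0.5})

# DELETE: in this example the URL alone says which resource to remove.
delete = build_request("DELETE", ENDPOINT + "/1")

for req in (create, read, update, delete):
    print(req.get_method(), req.full_url, req.data)

# To actually send one of these: urllib.request.urlopen(create)
```

Nothing leaves the machine until `urlopen` is called, so this is a safe way to inspect exactly what a request would contain.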

So, an API is hosted on a remote server to control access to some resource, like a database or a script that calls a trained ML model. From the client side, a request can be made to the server using HTTP commands. The request is handled by the API, which sends a response back to the client. An example could be an API hosted on Azure that includes a trained computer vision model for identifying objects in images. The user can send the image to the API from a simple Python script that includes a POST command to submit the image to the server-side resource. The API can then run the trained model on the image and return an object ID to the client side. The user has therefore been able to use a trained machine learning model on their own image without needing the large data resources, computing power or programming expertise required to train their own model.
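The request and response cycle described here can be sketched end to end with Python's standard library. This is only a toy: the `classify` function is a stand-in that labels an image by its size rather than a trained model, the `/classify` path is invented, and the server runs on the local machine instead of Azure. The client sends the image bytes with POST, a common choice when submitting data for processing.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


def classify(image_bytes):
    """Stand-in for a trained model: labels an image by its size only."""
    return "large" if len(image_bytes) > 1024 else "small"


class ClassifierHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Read the request body (the image bytes sent by the client).
        length = int(self.headers.get("Content-Length", 0))
        image_bytes = self.rfile.read(length)
        # Run the "model" and send the result back as JSON.
        body = json.dumps({"label": classify(image_bytes)}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # Silence per-request logging for this demo.


def request_label(url, image_bytes):
    """Client side: POST image bytes to the API and return the parsed JSON reply."""
    req = urllib.request.Request(
        url,
        data=image_bytes,
        headers={"Content-Type": "application/octet-stream"},
        method="POST",
    )
    with urllib.request.urlopen(req) as response:
        return json.loads(response.read().decode("utf-8"))


# Serve on a free local port in a background thread, then call the API once.
server = HTTPServer(("127.0.0.1", 0), ClassifierHandler)
threading.Thread(target=server.serve_forever, daemon=True).start()
url = "http://127.0.0.1:{}/classify".format(server.server_port)
result = request_label(url, b"\x00" * 2048)
print(result)
server.shutdown()
server.server_close()
```

The client never sees the model itself, only the JSON reply, which is the point of putting the model behind an API.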

Images and Containers

To host an ML model as an API on Azure, the model and its dependencies need to be collected together into a discrete package. This package includes an operating system and all the information required to configure an environment and enable a model to run, along with the model script, trained model and any associated code required to run the script or interact with the API. The package is called an image.

The image is a self-contained bundle of all the resources needed to run an app. The image starts from a base image – for example the Ubuntu base image could be downloaded from Docker Hub. Microsoft provides Python and R base images as well as images with Azure blob storage mounting functionality. These base images provide the fundamental tools required to run code on a server in a particular language. Requirements for a specific app are built on top of the base image, creating a “child image”. A running instance of a particular image is called a container.

The container makes the model portable. It is very common for a programmer to write code that runs perfectly on their local machine but fails when run on a different machine because of subtle dependencies that can be awkward or time-consuming to configure. Containers avoid this problem by including all the dependencies and environment configuration required to deploy a model in a discrete package that will run identically wherever it is deployed.

Docker

Docker is a tool for creating and running containers. It is a command line tool with a simple syntax for collecting resources into an image and pushing that image to a repository. Two basic items are required:

a) Dockerfile: The Dockerfile is a simple text file that acts as a list of instructions detailing precisely what the Docker client should do in order to build an image, from which containers are then run.

b) model code and associated data: The model code and usually a separate driver script are required. These are the guts of the actual application. They are copied into the image and run according to the instructions in the Dockerfile.
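To make the two items more concrete, here is a minimal sketch of what a Dockerfile for a Python app might contain. It is wrapped in a short Python script that writes the file out, purely to keep the examples in one language. The file names `requirements.txt` and `driver.py`, the port number and the choice of base image are assumptions for illustration, not a prescription.

```python
import tempfile
from pathlib import Path
from textwrap import dedent

# Hypothetical file names: requirements.txt lists the Python dependencies
# and driver.py is the script that starts the API.
DOCKERFILE = dedent("""\
    # Start from an official Python base image on Docker Hub.
    FROM python:3.11-slim

    # Work inside /app in the image.
    WORKDIR /app

    # Install dependencies first so this layer is cached between builds.
    COPY requirements.txt .
    RUN pip install --no-cache-dir -r requirements.txt

    # Copy the model code, trained model and driver script into the image.
    COPY . .

    # Document the port the API listens on, then start the driver script.
    EXPOSE 8080
    CMD ["python", "driver.py"]
""")


def write_dockerfile(directory):
    """Write the Dockerfile text into `directory` and return its path."""
    path = Path(directory) / "Dockerfile"
    path.write_text(DOCKERFILE)
    return path


# A temporary folder keeps this demo tidy; use your project folder instead.
dockerfile_path = write_dockerfile(tempfile.mkdtemp())
print(dockerfile_path.read_text())
```

Each instruction adds a layer on top of the base image, which is how the app-specific “child image” gets built up.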

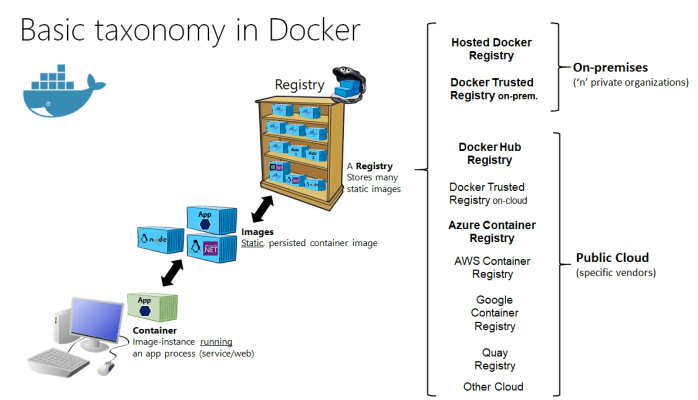

Container registries

Once an image has been built, it needs to be hosted somewhere on the server. A container registry is a repository for images that sits on the server – in Azure this is simply called the Azure Container Registry. An individual registry is created by first allocating a resource group – a simple way to group related resources – and then creating the registry inside that resource group.

An image built locally is pushed up to the registry and then deployed, creating a container instance running on Azure. At this point, the app is running and users with the URL can make requests using the HTTP commands described above.
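As a hedged sketch of the registry workflow, the script below assembles the Azure CLI and Docker commands I would expect to run for the create, log in, build and push steps, and prints them rather than executing them. The resource group, registry, location and image names are all hypothetical, and the final step of starting a container from the pushed image is left out.

```python
import shlex

# Hypothetical names; registry names must be unique across Azure.
RESOURCE_GROUP = "my-resource-group"
REGISTRY = "myregistry"
LOCATION = "westeurope"
IMAGE = "{}.azurecr.io/ice-classifier:v1".format(REGISTRY)

commands = [
    # Create a resource group, then a registry inside it.
    ["az", "group", "create", "--name", RESOURCE_GROUP, "--location", LOCATION],
    ["az", "acr", "create", "--resource-group", RESOURCE_GROUP,
     "--name", REGISTRY, "--sku", "Basic"],
    # Authenticate the local Docker client against the registry.
    ["az", "acr", "login", "--name", REGISTRY],
    # Build the image from the Dockerfile in the current folder and push it.
    ["docker", "build", "--tag", IMAGE, "."],
    ["docker", "push", IMAGE],
]

for command in commands:
    print(shlex.join(command))
    # To execute for real: subprocess.run(command, check=True)
```

Printing the commands first makes it easy to check the names before anything is created on a paid account.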

Scaling

Simply hosting an app in this way is therefore fairly straightforward, but there are also issues of load balancing and security to consider. An example is blob storage access – from a local machine it is possible to define the blob access keys in-script or in a plain text file, but this is a security risk for code held publicly in the cloud. Instead, Azure Key Vault can provide secure blob access. Similarly, hits to an app hosted on Azure have a cost associated with them, payable by the account holder, so there may need to be a limit on the number of requests handled per unit time. On the other hand, the compute resources may need to be prepared for a high volume of requests. This is commonly handled using Kubernetes, which spawns containers on demand to ensure sufficient resources are available to handle large volumes of requests, but then de-allocates them when they are not required, reducing costs. Microsoft offers a smooth way to achieve this using the Azure Kubernetes Service.
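The idea of limiting requests per unit time can be illustrated with a small sliding-window rate limiter. This is a generic sketch of the technique, not how Azure implements throttling; the `RateLimiter` class and its parameters are invented for the example.

```python
import time
from collections import deque


class RateLimiter:
    """Allow at most max_requests calls in any window of `window` seconds."""

    def __init__(self, max_requests, window, clock=time.monotonic):
        self.max_requests = max_requests
        self.window = window
        self.clock = clock
        self.timestamps = deque()  # Times of recently accepted requests.

    def allow(self):
        now = self.clock()
        # Forget requests that have dropped out of the window.
        while self.timestamps and now - self.timestamps[0] >= self.window:
            self.timestamps.popleft()
        if len(self.timestamps) < self.max_requests:
            self.timestamps.append(now)
            return True
        return False


# Five requests arrive almost at once; only the first three are accepted.
limiter = RateLimiter(max_requests=3, window=60.0)
decisions = [limiter.allow() for _ in range(5)]
print(decisions)  # [True, True, True, False, False]
```

An API handler would call `allow()` before doing any work and return an error response (HTTP status 429, “Too Many Requests”, is the conventional one) when it comes back `False`.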

Overview:

This has been a very light overview of the basic route to hosting an API on Azure. Later posts will include more detail on the nuts and bolts of how this is achieved. I’m blogging as I’m learning, so comments and suggestions are welcome!